Welcome to our Adobe Max 2022 liveblog, where we’ll be covering all of the big announcements from today’s festival of creative apps. Whether you’re a fan of Photoshop, Lightroom, Premiere Pro, or just seeing the future of creative digital tools, Max is always a fun ride – and we’ll be rounding up all of the news here as it happens.

What exactly is Adobe Max? The software giant calls its LA-based event a ‘Creativity Conference’, but it’s really an excuse for Adobe to unleash a confetti blast of announcements across its huge suite of Creative Cloud apps. Think fancy, AI-powered Lightroom tricks, new launches like Photoshop on the Web, and lots of future-gazing demos around machine learning, AR and VR.

Even if you aren’t a hardcore Adobe fan, the Max conference is always a good sneak peek at the cutting-edge creative tools that are coming down its expertly-rendered pipeline. Given the increasingly hot competition Adobe is facing right now, from Google’s AI editing tools to machine-learning wizards like Dall-E, this year’s conference should be particularly fascinating.

The live virtual keynote doesn’t kick off until 9am PDT / 5pm BST (or Wednesday 19th at 2am AEST), but Adobe has historically made some big announcements in the run-up to its live presentation – and we’re expecting the same again this year.

So join us as we discuss everything we’re hoping to see and what the new updates for Photoshop, Lightroom, Premiere Pro, Illustrator and InDesign could mean for your creative noodlings, whether you’re a Creative Cloud subscriber or not.

Hello, I’m Mark (TechRadar’s cameras editor), and welcome to our Adobe Max 2022 liveblog. Adobe’s ‘Creativity Conference’ has already kicked off in LA, but today is the big day for the software giant – and anyone who uses its dozens of apps.

From 9am PDT / 5pm BST (or 2am AEST on Wednesday 19th), Adobe will be streaming its two-hour keynote, which will give us a glimpse of the new treats coming to software like Photoshop, Lightroom, Premiere Pro and more.

But in the run-up to that big reveal, we’re expecting to see Adobe make some teaser announcements around the things that it’ll be fully unwrapping later. So if you want to know what we’re hoping to see at Adobe Max – and our early thoughts on the news as it happens – stay tuned to this regularly-updated liveblog. We even promise not to mention the metaverse (well, we’ll try).

So what exactly are we expecting to see at Adobe Max 2022? I’m particularly fascinated by this year’s event because there are so many rivals apparently eating Adobe’s lunch, or at least stealing a few of its fries.

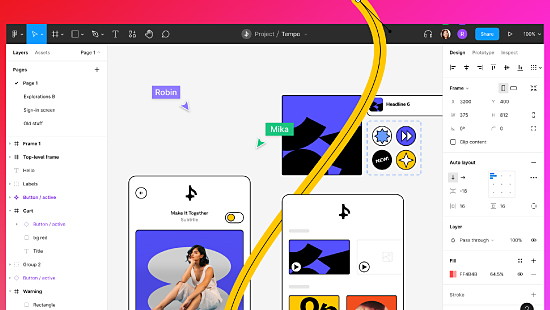

Firstly, there’s the increasingly popular Canva, a free graphic design tool that’s expanding into new areas like video editing. It’s one of the main reasons why Adobe splurged $20bn on Figma last month (that’s more than Facebook paid for WhatsApp in 2014).

Then there are the text-to-image creators like Dall-E and Midjourney, not to mention Google building AI photo editing into its Pixel phones. So with all of this in mind, I’ve put together the top five things I’m hoping to see Adobe announce or talk about during the Max 2022 keynote later.

Prediction #1: Virtual clay on Meta Quest Pro

Here’s something that went a little under the radar during the Meta Connect event last week. Skip to the 42:30 mark in Meta’s keynote and you’ll see Mark Zuckerberg announce that Adobe is making creative apps for the new Meta Quest Pro mixed-reality headset.

Referring to Adobe, Zuckerberg said “next year, they’ll begin releasing a suite of apps for professional 3D creators, designers and artists – from collaborative design reviews to Substance 3D Modeler using Quest Pro’s controllers.”

The latter is something I reckon we’ll hear a lot more about at Adobe Max. The sculpting software is already in beta and lets you muck about with digital clay. That actually sounds custom-made for VR/AR headsets, unlike work meetings with weird legless avatars.

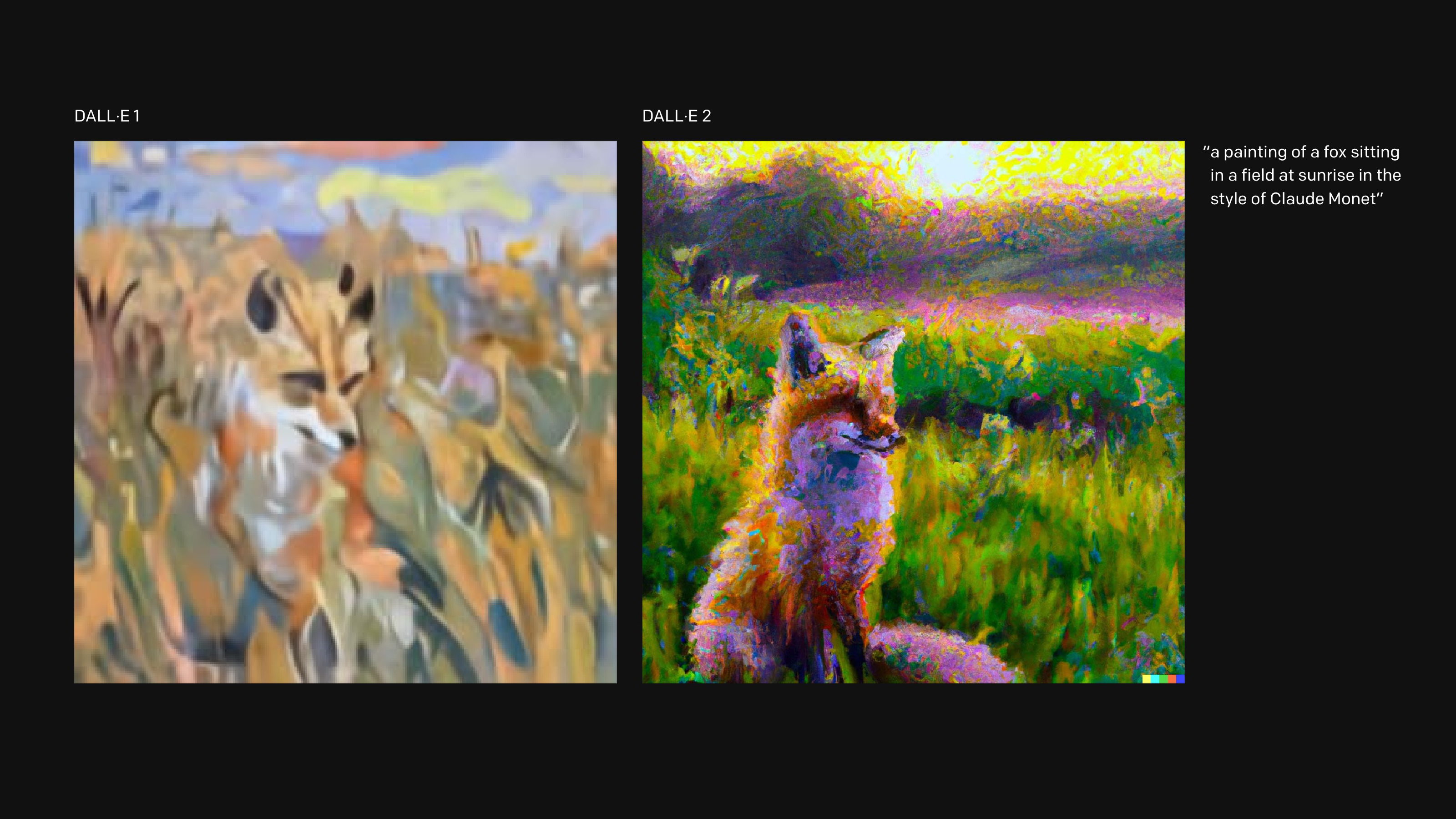

Prediction #2: Adobe’s response to Dall-E 2

I’m both excited and slightly terrified by the emergence of text-to-image creators like Dall-E 2 and Midjourney. These AI image generators seem to be improving on a daily basis and it feels like Adobe needs to respond. After all, if the average person can just type some text and instantly receive world-class illustrations or images, why bother with Adobe apps like Photoshop and Illustrator?

Of course, the likes of Dall-E are new tools, rather than replacements for human creativity. But professionals are already using Dall-E and Midjourney in their workflows, so it’s a space Adobe can’t afford to ignore. But what does it have up its AI-generated sleeves? Hopefully we’ll get a peek later.

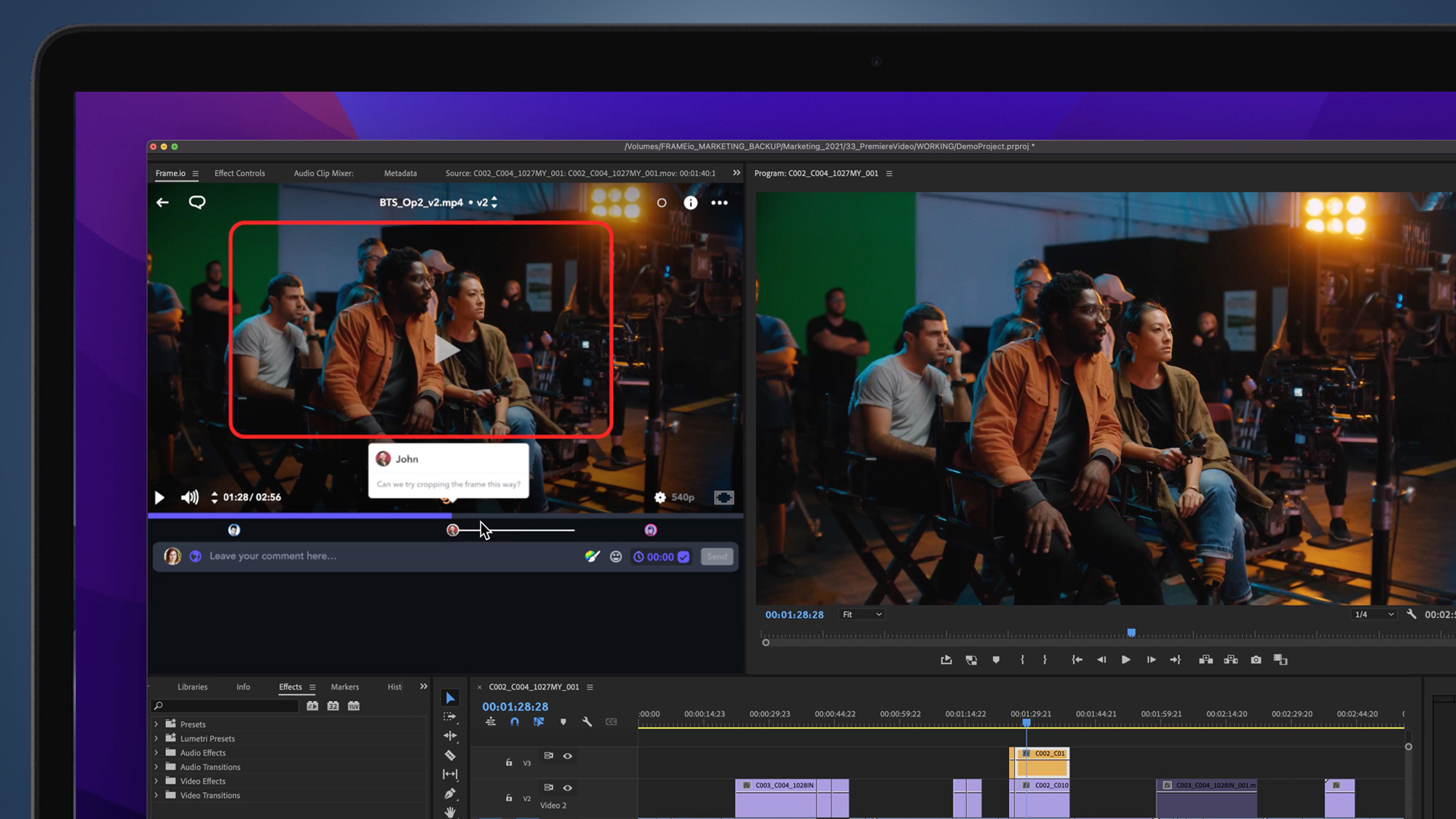

Prediction #3: The expansion of Frame.io

Just over a year ago, Adobe splurged $1.3bn on a video software service called Frame.io, which lets creative teams upload, review and approve video footage remotely in the cloud.

That service has since plugged neatly into Adobe’s Premiere Pro and After Effects software, with Creative Cloud subscribers getting 100GB of free storage. But where will it go next?

Adobe is surely looking to expand its powers, given it doesn’t do a lot more than the original plug-in. Hopefully we’ll see it properly realize its potential as a super-streamlined video editing platform at Adobe Max 2022.

Prediction #4: More AI smarts for Lightroom and Photoshop

I’ve been pretty bowled over by the development of Adobe’s masking tools in the past few years. If the past couple of Adobe Max conferences are anything to go by, we should see them go up a notch in Lightroom and Photoshop today.

I’m already very reliant on Lightroom’s ability to automatically select a photo’s subject or the sky, then let me refine that selection with a graduated filter. But there’s room for Adobe to go even more granular here, perhaps automatically picking the specific parts of someone’s face to edit.

Or perhaps Adobe Sensei could learn to fetch me a beer while I spend more time faffing about with the color wheel.

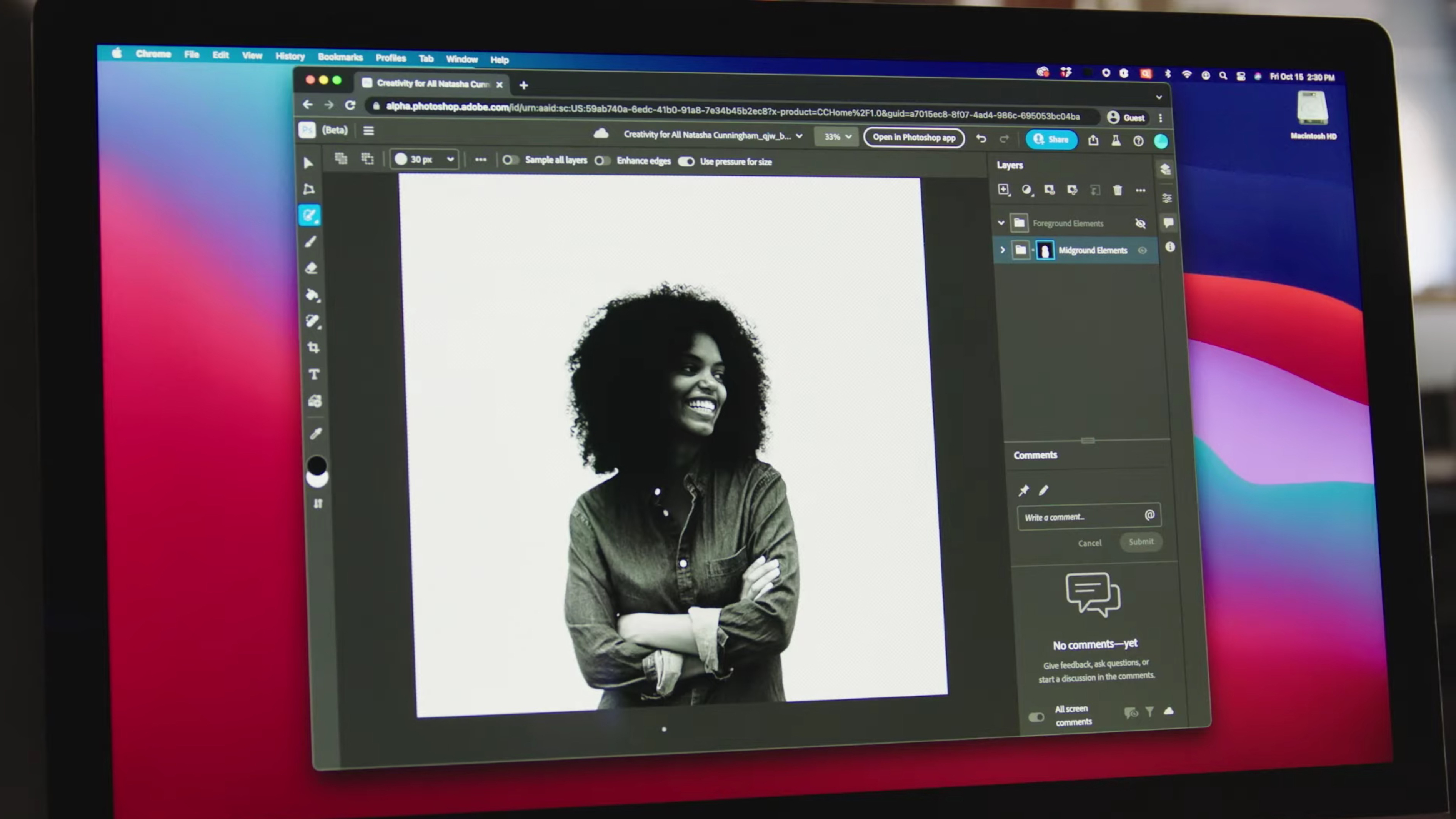

Prediction #5: ‘Photoshop on the web’ goes free for all?

This time last year I was recovering from falling off my chair at the news that Adobe had finally created a web-based version of Photoshop. The awkwardly named ‘Photoshop on the web’ turned out to be less of a Photopea-killer, and more just a way for existing Creative Cloud subscribers to collaborate on Photoshop files.

But earlier this year The Verge spotted that Adobe was testing a free-to-use version in Canada, and we’ve since seen the service expand to become more of a standalone version of the image editor.

Could Adobe go the whole hog and announce ‘Photoshop on the web’ as fully free for everyone? It’d certainly be a bold, and very welcome, statement.

One more thing…

There is one bonus thing I’d like to see at Adobe Max 2022 (beyond an Oprah-style giveaway of Creative Cloud for anyone watching the livestream). For a while, Adobe’s been promising us a universal camera app that might act as a next-gen version of Photoshop Camera.

CNET recently spoke to Marc Levoy (formerly of Google, now an Adobe VP), who said that Adobe’s new app will be for “photographers who want to think a little bit more intently about the photograph that they’re taking and are willing to interact a bit more with the camera while they’re taking it”.

Specific details are thin on the ground, but Levoy did say Adobe’s working on a “feature to remove distracting reflections from photos taken through windows”, among other tricks. The app will apparently be here in the “next year or two”, so could we get a preview at Adobe Max 2022? I hope so, because it sounds unlike any photo editing or camera apps that are out there right now.

So, how exactly can you tune into Adobe Max 2022 later? Like last year, there’s a YouTube livestream that’s free for all to tune into – it’s above and the keynote kicks off at 9am PDT / 5pm BST (or 2am AEST on Wednesday 19th for those in the southern hemisphere).

The keynote’s scheduled to go on for two hours, so expect a barrage of Adobe-related information. But if Adobe Max 2022 is anything like previous years, we’re expecting to see some news break well before the keynote starts.

Adobe’s Chief Product Officer, Scott Belsky, has shared a little behind-the-scenes of the Adobe Max livestream setup. Confirmed: squirrels will be making an appearance, as will a “glimpse into the future”.

There have been some pretty big moments at Adobe Max in the past few years – Photoshop coming to the iPad, the arrival of web-based versions of Illustrator and Photoshop – will we get anything this big at Adobe Max 2022? We’ll find out very soon.

backstage with the team today in prep for #AdobeMAX tomorrow – showcasing the latest and greatest across each creative category, and a glimpse into the future as well. https://t.co/jlJ0uDEzmy to join us… 😎 pic.twitter.com/CzATifKR9g (October 17, 2022)

The recent launch of Photoshop Elements and Premiere Elements 2023 gave us a good taste of what might be to come at Adobe Max 2022 for its full, subscription-based versions.

Those stripped-down, one-off purchases are all about AI-powered features, including auto-reframing and Guided Edits. Outside of its neural filters, Adobe’s main use of machine learning has been to speed up the editing process for creators at all levels.

That’ll likely be a big theme at Adobe Max 2022 and that’s fine by me – more time to develop my creative vision* (*read snacks and mindless Twitter scrolling).

As a photographer, there’s no doubt that today’s expected Lightroom and Photoshop updates will make the most difference to my life – but the thing I’m most interested in seeing at Adobe Max 2022 is Adobe’s vision for VR and mixed reality headsets.

I’m yet to be convinced about a lot of the applications we’ve seen for VR/AR headsets so far, particularly virtual work meetings with legless cartoon avatars. I just don’t see that being the future, or a future I want to be part of.

But the potential for creative applications, particularly 3D modeling, is massive – and having Adobe supplying the software for the gradually improving hardware could give it the shot in the arm it needs. It also feels like another crossroads moment for Adobe, like its move to a subscription, cloud-based model a decade or so ago.

Okay, so Adobe’s just fired a confetti gun of Max announcements ahead of the livestream later – and there are some big headlines among the many updates. Let’s round up the big news that’s just dropped.

Adobe Max news #1: Adobe goes big on VR/AR with Meta Quest apps

As Mark Zuckerberg hinted at the Meta Connect event last week, Adobe’s going all-in on the potential of mixed reality headsets – and it’s just announced a new 3D Modeler app, which lets you build things with your hands in virtual reality without needing a computer (or switching between your PC and a headset).

Adobe already has its desktop Substance 3D Modeler app, which is in beta for Windows users, so we’re looking forward to seeing this in action during the keynote later. But the video above gives us a good taste, and it looks a lot more interesting than Adobe’s PDF document viewing app (also coming to Meta headsets).

Adobe Max news #2: The Fujifilm X-H2S and two RED cameras can send photos or video directly to Adobe’s cloud

Here’s an unexpected but welcome development for creatives who use Adobe’s Frame.io to share their video and photo assets among teams – the service’s Camera to Cloud (C2C) function will soon work in-camera on the Fujifilm X-H2S, RED V-Raptor and RED V-Raptor XL.

That could be a particularly big deal for the Fujifilm X-H2S, as it becomes the first digital stills camera to natively work with Frame.io’s Camera to Cloud service. In theory, that means being able to share raw photos with a wider team based anywhere in the world, and get their retouches and annotations – without any cables or computer.

You’ll need some very reliable, high-bandwidth broadband to share video in this way, but it’s a taste of a much simpler future that’s been a long time coming. The firmware update for the X-H2S is expected to arrive in Spring 2023.

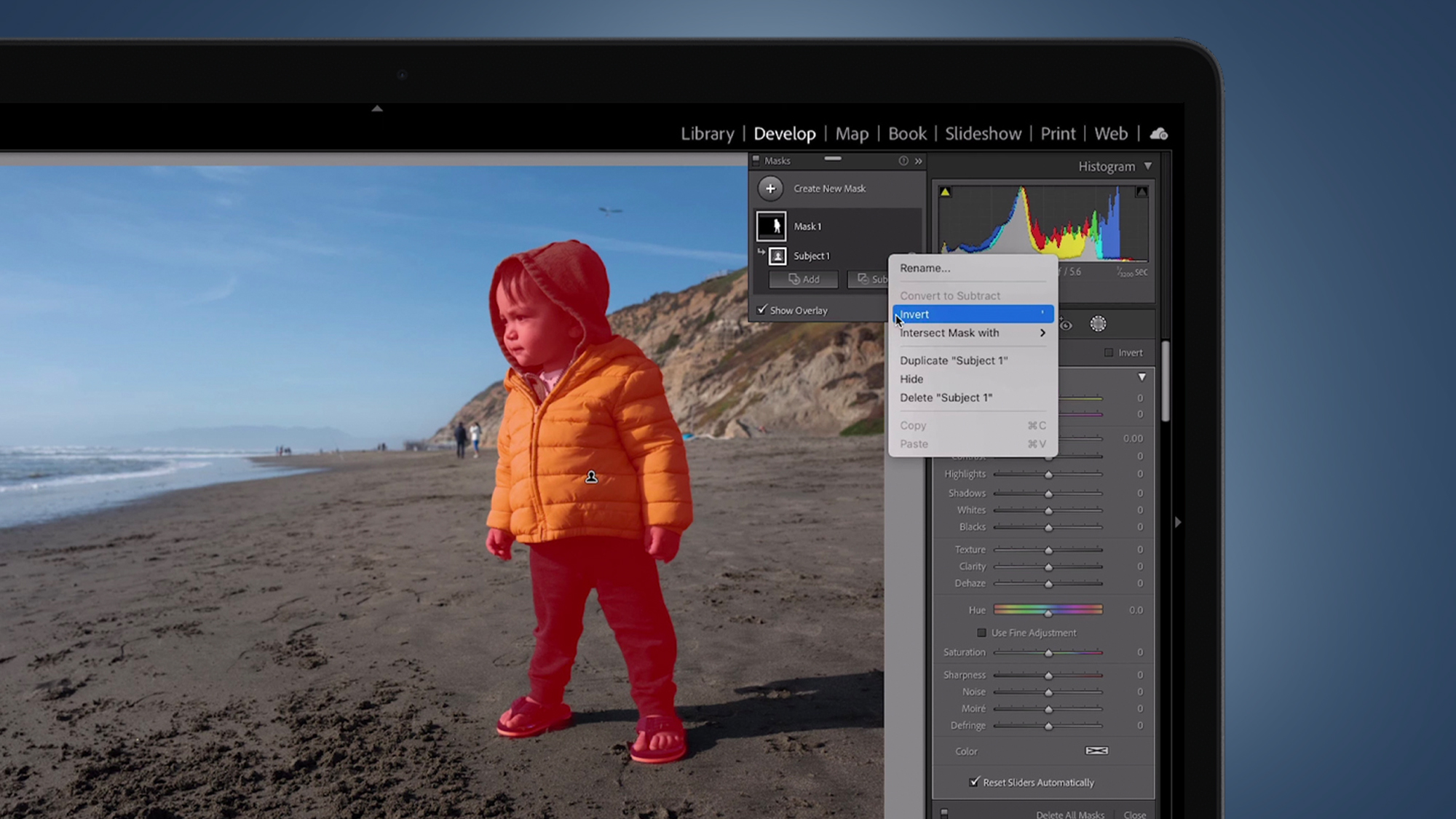

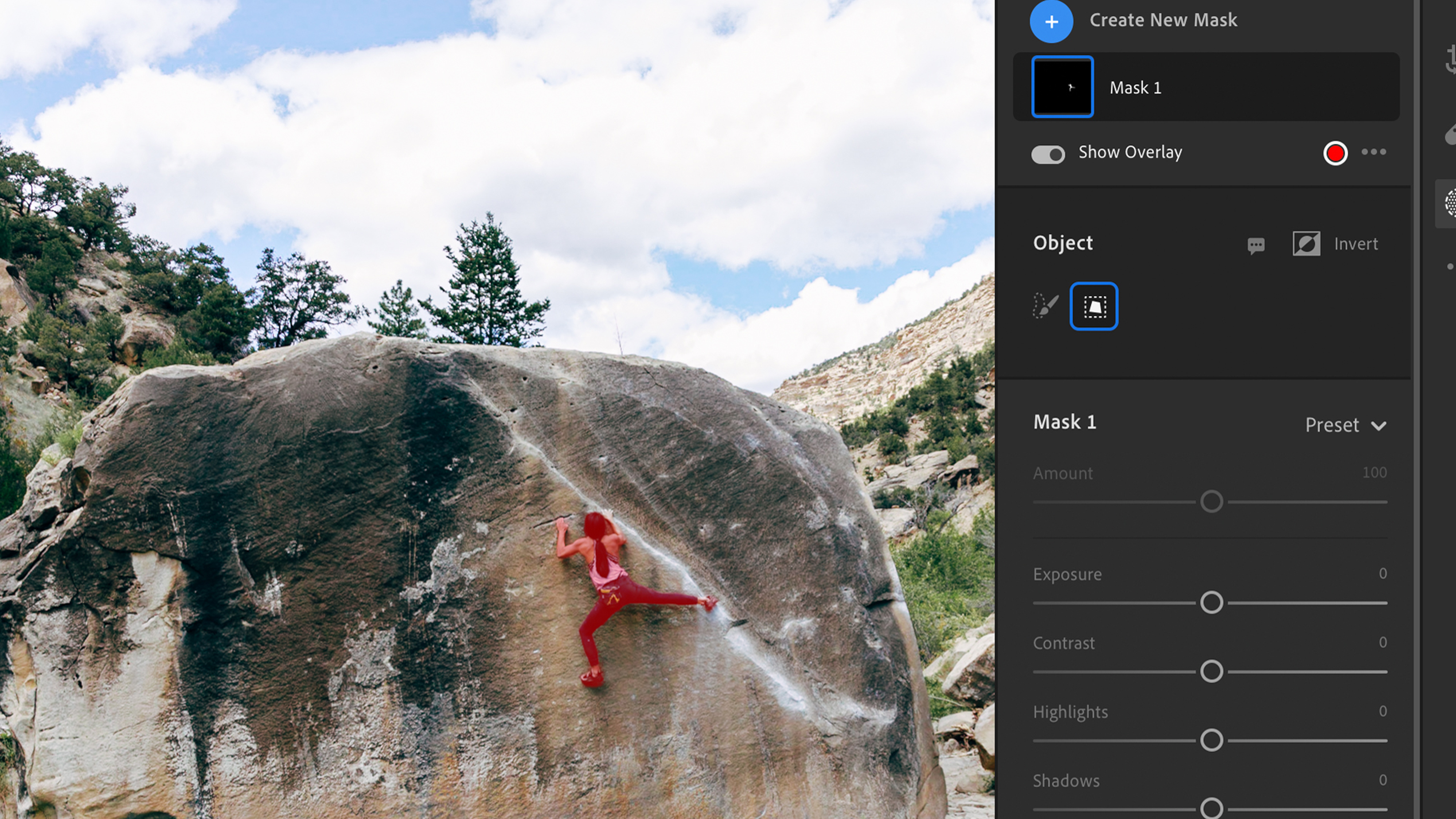

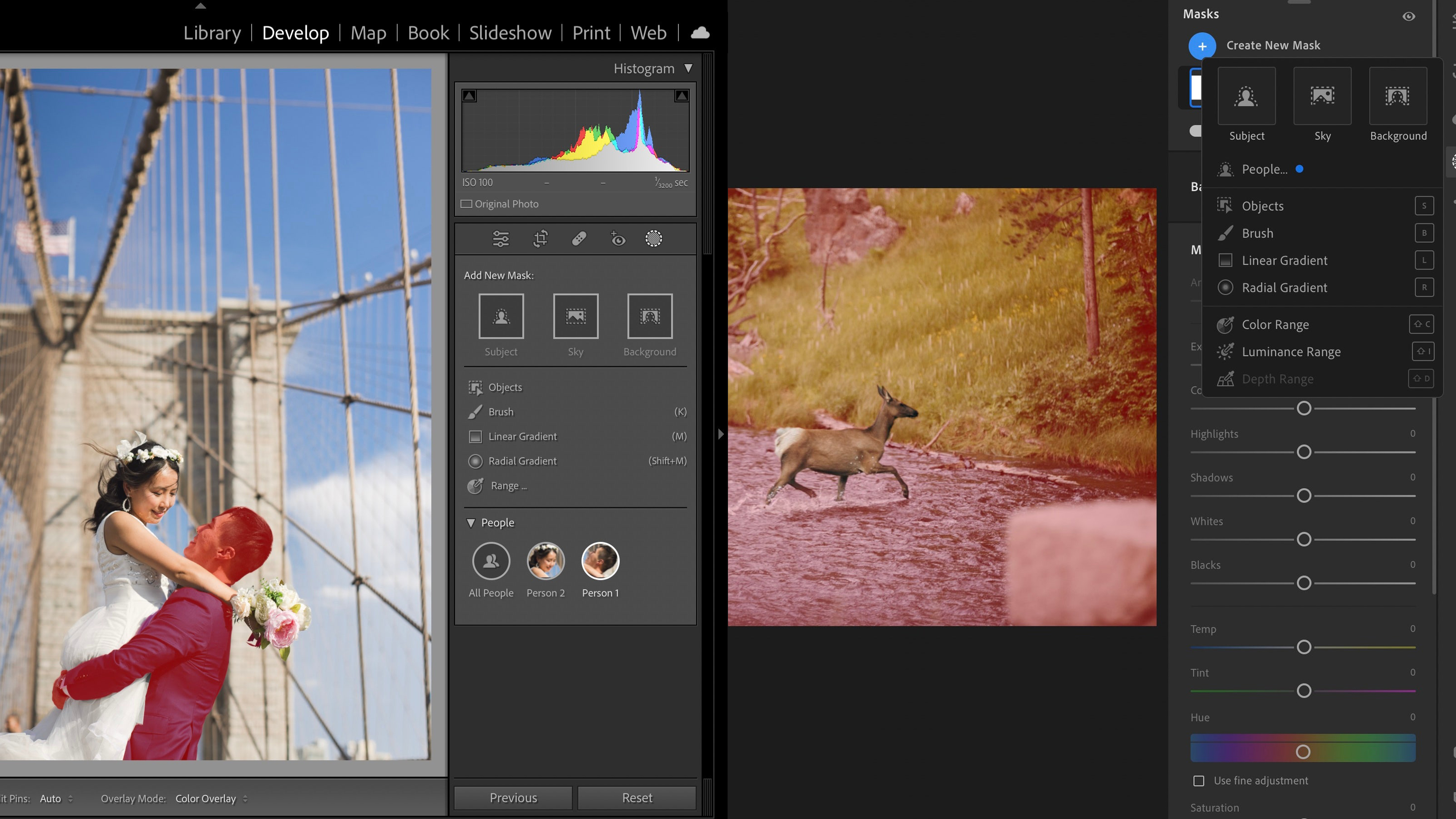

Adobe Max news #3: Better AI masks for Photoshop and Lightroom

In the past, one of the most time-consuming tasks for photo retouchers was selecting the right part of an image for local adjustments – but Adobe’s just made that even easier with some updated tools for both Photoshop and Lightroom.

Photoshop’s Object Selection tool, and Lightroom’s similar ‘Select People’ and ‘Select Object’ masks, now have much more granular smarts. The former can now recognize objects and regions like sky, buildings, water, plants and different types of ground.

Lightroom’s incoming update will also make it much more powerful for portrait shooters, thanks to its ability to select specific body parts like face skin, body skin, eyes, teeth, lips and hair. All of this means less wasted time in the editing process, and we’ll raise a perfectly edited glass to that.

Those are the big headlines from Adobe Max 2022 so far, but there are also a ton of new updates for Premiere Pro, After Effects, Illustrator, Creative Cloud and more, which we’ll be digging into soon.

The future-gazing part of my brain keeps coming back to those new 3D modeling tools for VR/AR headsets, though. The Substance 3D Modeler app, which is in beta for Windows, lets you switch between the desktop and VR versions of your work. The ability to precisely mould virtual clay also just looks like a ton of fun, beyond the more serious possibilities for prototyping and game design.

Adobe’s new 3D Capture tool also lets you turn real-world objects into 3D models from a series of photos, a process known as photogrammetry. New virtual worlds are going to get built pretty fast with this stuff – let’s hope there are plenty of places to hide from cartoon avatars of Mark Zuckerberg.

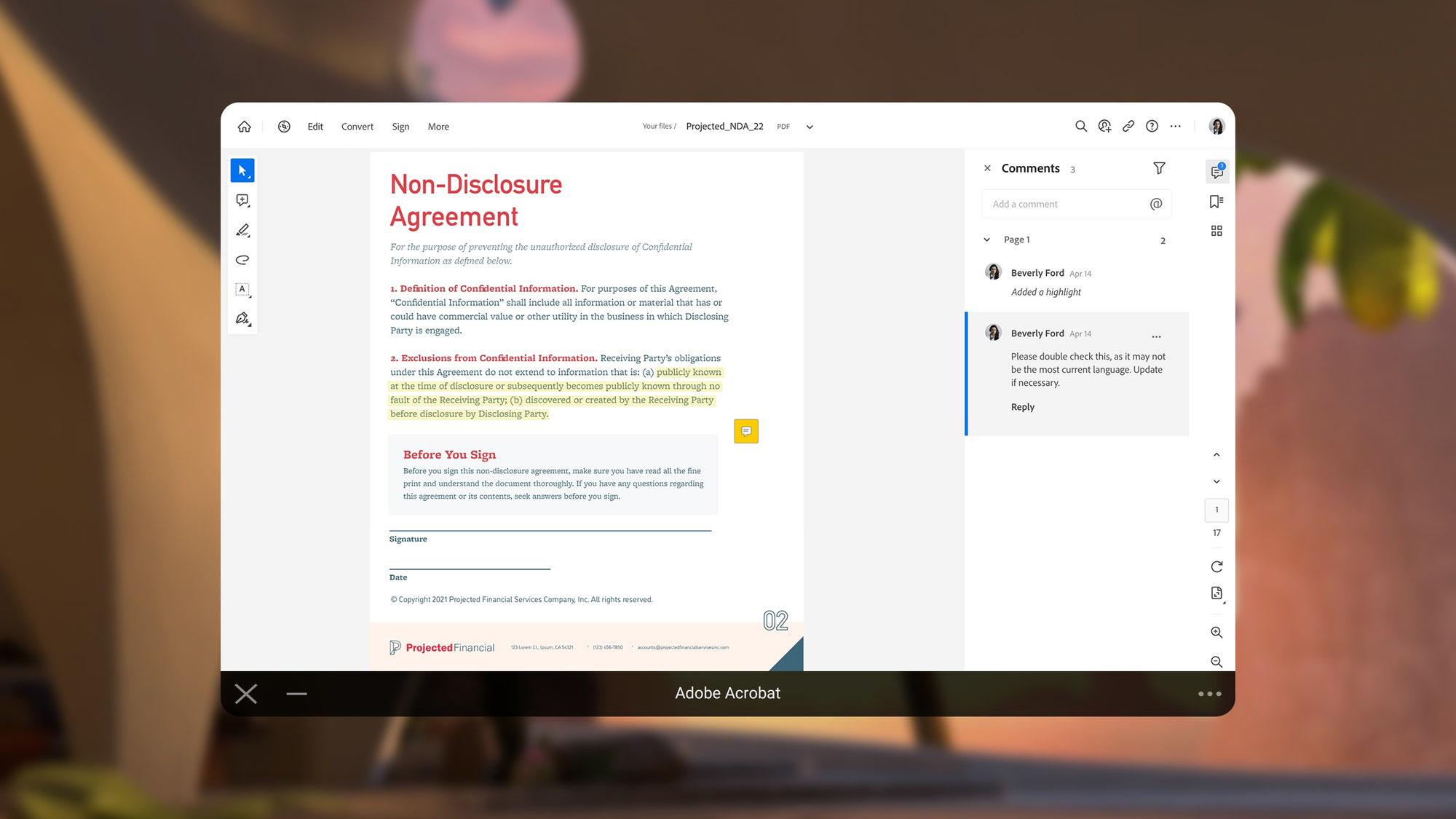

VR has struggled to find its true calling, but now it’s here… signing non-disclosure agreements in the metaverse! Yes, the Adobe Acrobat app is now live in the Meta Quest Store, and we just immediately dropped Beat Saber mid-game to embrace this new PDF experience.

Okay, let’s revisit some of those updates to Lightroom and Photoshop. As a big Lightroom user, I’m particularly looking forward to those new masking tools, which will apparently be “available for everyone over the course of the week”.

Lightroom (both the standard and Classic versions) will now be able to Select People, Select Objects and do a one-click Select Background. That last one could save me precious micro-seconds compared to inverting a ‘select subject’ mask.

The ‘Select People’ mask sounds particularly powerful for portrait shooters – you can apparently select specific body parts like face skin, body skin, eyes, teeth, lips and hair. These are more extensions of existing tools, but all of them will make choosing the parts of your photo that you want to edit a lot quicker.

The latest After Effects has dropped. To absolutely nobody’s surprise, the update’s centered around creating more efficient workflows.

Adobe’s VFX software now (finally) features H.264 encoding, so you can export straight from the render queue and composition presets. And the tool’s teeming with more than 50 new animation presets.

To sweeten the deal, Adobe reckons you’ll even see 200% faster template performance in Premiere Pro.

Right, after that blast of Adobe Max 2022 news, I’m signing off and handing you over to TechRadar Pro’s Steve Clark for the run-in to Adobe’s keynote.

That livestream kicks off in just over 90 minutes, and as we approach that we’ll be highlighting our pick of the other news and updates we’ve seen rain down on Adobe fans today.

Adobe is partnering with Wix. The plan is to integrate the ultra-modular Express Embed SDK into the popular website builder to boost creativity and simplify workflows.

According to Yaniv Vakrat, Chief Business Officer at Wix, the firm will “explore ways in which we can enable our users to use Adobe Express capabilities to enhance the image editing process in our Wix Media Manager.”

We already know Adobe’s PDF editor is headed to the metaverse – but, like Photoshop, Adobe Acrobat is expanding on the web, too.

Look out for the new Quick Actions toolbar and Discover panel, which should make it easier than before to find what you need. The company has also rejigged the Edit, Convert, and Sign tools based on how you use them (“purpose-driven categories”, as Adobe puts it).

And great news – it’s also enhanced key accessibility features like the read-out-loud tool.

We’ve got some Adobe Sensei-powered enhancements for Premiere Pro and After Effects to look forward to.

In the video editing software, Auto Color amps up color corrections by intelligently applying adjustments. On the audio side, Remix – already a familiar feature – cleverly retimes music to match your video clips.

Over in After Effects, Scene Edit Detection is also getting a boost from the company’s ubiquitous artificial intelligence. The tool can automatically detect scene changes. You can then turn each scene into a separate layer or add markers at edit points.

10 minutes until showtime.

Expect plenty of mentions of productivity, creativity, and online collaboration. And more on the metaverse and the rather exciting Adobe Substance 3D Modeler.

You can watch Adobe Max online live and for free in the video above.

This is an interesting one. According to NVIDIA, Premiere Pro is getting RTX acceleration, creating major performance boosts for AI effects.

In its latest blog post, it says, “For example, the Unsharp Mask filter will see an increase of 4.5x, and the Posterize Time effect of over 2x compared to running them on a CPU (performance measured on RTX 3090 Ti and Intel i9 12900K).”

You’ll still need a decent video editing computer, but it’s a great step towards our inevitable AI-backed future.

And we’re live – at last.

Kicking things off, Shantanu Narayen, Adobe Chairman and CEO, takes to the stage to welcome us all. And to highlight some massive Adobe milestones, including the company’s 40th birthday coming this December.

“Powerful, accessible, and fun,” is how Narayen describes Adobe’s products. Hard to disagree with that assessment, given today’s announcements.

Accessible is key here. Striving to do social good, Adobe Express is now available free to non-profits around the world.

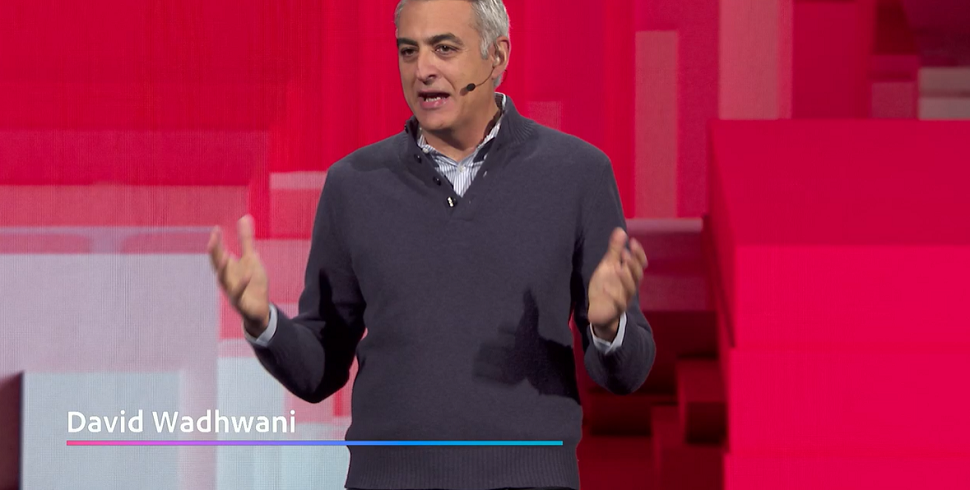

After a wild celebration of creatives across the entertainment industry, David Wadhwani, President of Adobe’s Digital Media Business, is up to wax poetic about serving inspiring content creators.

“Content is fuelling the global economy,” he announces, before focusing on Adobe Sensei’s power to transform productivity and possibility.

To applause, Wadhwani makes a commitment to use AI to enhance, not replace, creators and to build standards around the ethical use of artificial intelligence.

Collaboration and productivity take center stage courtesy of Scott Belsky, Chief Product Officer and Executive Vice President at Adobe Creative Cloud.

Another plug for Adobe Express – they’re going in hard for the platform. A response to the increasing popularity of graphic design tools like Canva?

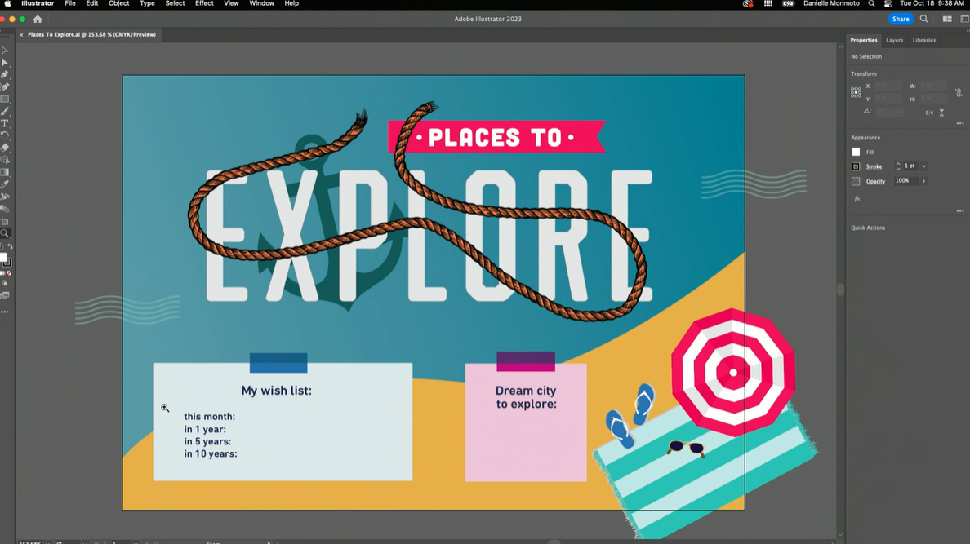

It’s followed by a demo of the new Share for Review tool in Illustrator and InDesign – which now supports a host of modern formats; no conversion needed.

A very well-received demo of the new InDesign style packs and Illustrator’s incredible intertwine tool to add depth and dimension to designs. No more copying and masking little by little. Just lasso the parts you want to hide and voila.

It’s followed by an in-depth look at how Share for Review works. This is available in the latest version of Adobe’s DTP software and the digital art software. It’s rolling out to other products “soon enough.”

More Adobe Express – this time exploring the AI integration and the remove background quick action.

We’re then treated to a demo of Express’s social media scheduler. This is nothing new – it’s been around since May this year. But it’s a chance to show how simple it is to use.

It’s all about “making common tasks easy,” we’re told – particularly for marketing teams.

Another cool Express feature gets an outing: AI-generated fonts.

Create a unique font using AI. Start with any stock font – then write a descriptor, for example flowers. Adobe Sensei will generate a font that’s unique to your text suggestion.

On to the latest updates to Photoshop.

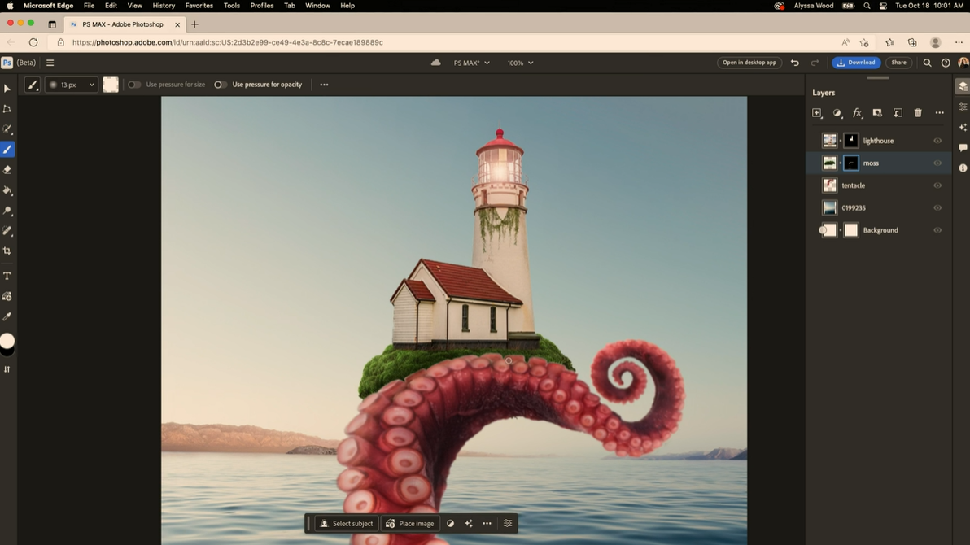

Alyssa Wood, Design Manager at Photoshop, kicks off with the beta of Photoshop on the web – and the online photo editor includes plenty of “familiar faces” from the desktop version.

At the bottom of the screen, we see a contextual taskbar of suggested next steps that changes depending on what you’re doing.

Once you’ve made smaller tweaks online, you can open the image in the desktop photo editor. It all seems very seamless, as you’d expect from the super-integrated Adobe toolstack. Wood then demos editable gradients for more precise results.

And yes, the beta Share for Review is available here in Photoshop, too. Collaboration is king.

Sure as night follows day, Photoshop is followed by Lightroom.

But after a unique and inspiring Lightroom use-case from Ghanaian photographer Michael Aboya, Adobe focuses on the Content Authenticity Initiative – a way to help combat misinformation and the misattribution of digital images.

And the big reveal: Leica and Nikon are implementing digital provenance tech into the Leica M11 Rangefinder and Nikon’s Z9 mirrorless camera.

More efficient workflows incoming. New to Premiere Pro is Media Replacement with motion graphic templates. Just drag and drop it onto the timeline to re-use effects. No more tweaking one element, then fixing issues that arise across other tracks as a result.

The excitable Jason Levine, Principal Worldwide Evangelist for Adobe, goes on to show off some more cool video editing tools. As part of the Share for Review roll-out, collaborators can draw, paint, and highlight specific elements on video frames, and leave comments on the video player. And it’s all frame-accurate.

Feedback can be viewed right on the timeline (so no tabbing back and forth between apps to see what needs fixing or reviewing).

Frame.io’s “revolutionary” Camera to Cloud tool is in the spotlight. As we detailed earlier, it’s confirmed that C2C upload capabilities will be natively integrated into the RED V-Raptor XL with no additional hardware needed.

It all works quite smoothly. Upload from the camera, input the six-digit code on your phone and select the destination folder, and the hardware does the rest.

If you were wondering how Coca-Cola uses Adobe collaboration tools, then wonder no more. They allow for diverse perspectives and keep the team evolving its designs across the whole workflow. The integrated toolstack delivers a “universal language” – literally and figuratively.

Figma up now – the recently acquired UI, prototyping, and web development tool Adobe picked up for a cool $20bn.

And the focus is on making the product design process accessible to everyone, particularly during the brainstorming stage.

Dylan Field, co-founder of Figma, hopes that with Adobe’s backing, the team can iterate and update faster, with more powerful tools. Adobe Fonts is top of the wishlist. But after its Adobe Max reveal, Field says that intertwine tool is looking pretty attractive, too.

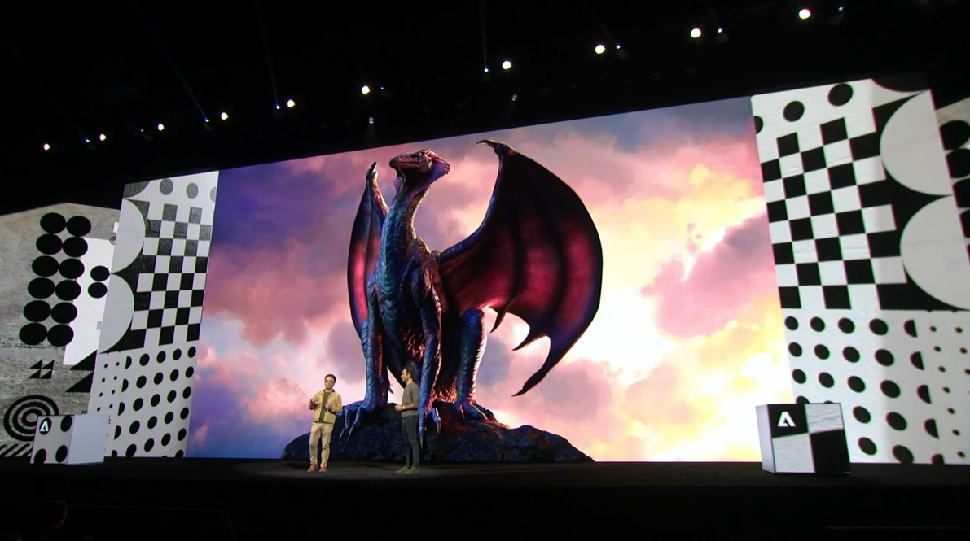

And now for Adobe Substance 3D Modeler – the VR-based 3D modeling software that lets you create pretty much anything in digital clay.

It’s faster and more natural than using Substance on desktop, according to Gio Nakpil, Senior Design Evangelist and Creative Director at Adobe.

It’s available now for desktop and works with Meta headsets.

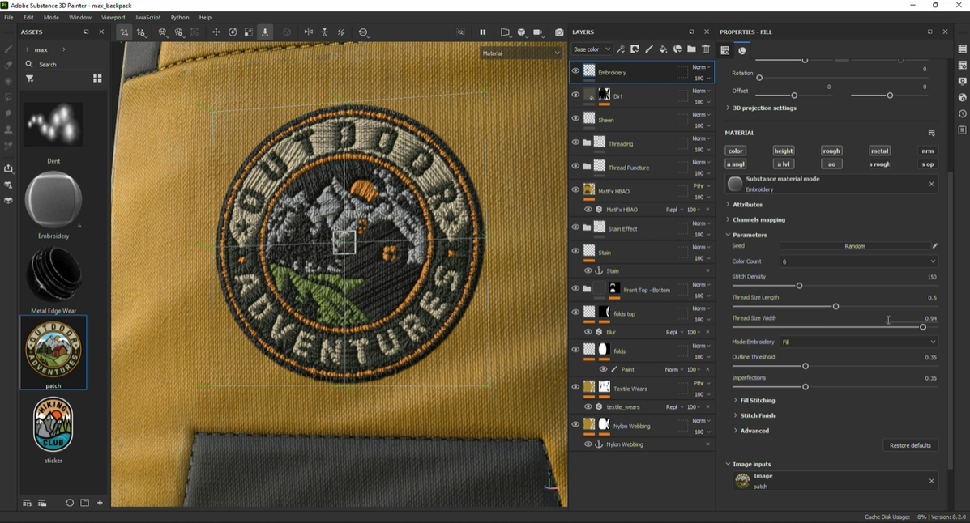

A very clever demo follows, showing how simple it is to take content created in Photoshop and Illustrator, and turn it into a 3D asset in Substance Painter.

You can then pop that creation into Substance Stager for compositing, finalizing, and rendering. This third tool uses “machine learning magic” – we assume this isn’t a technical term.