Adobe has revealed its answer to AI art generators like Midjourney, Dall-E, and Stable Diffusion – and the new family of generative AI tools, collectively called Adobe Firefly, could ultimately be as influential as the original Photoshop was in 1990.

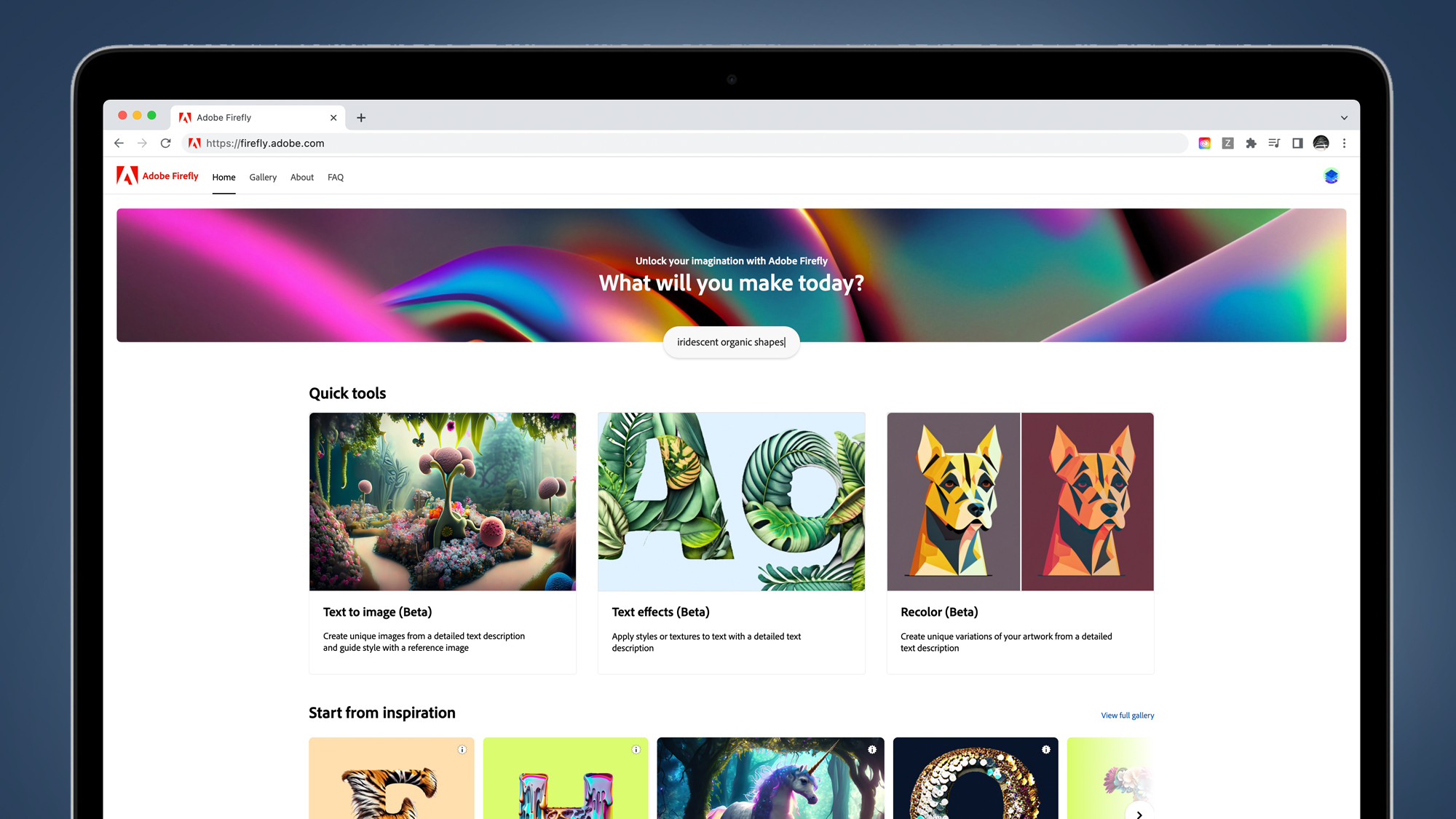

The giant behind apps like Photoshop and Illustrator has been baking AI image generation into its software for years, but Adobe Firefly takes it to a whole new level. Its first Firefly beta brings text-to-image generation to Photoshop and gives you the ability to apply styles to text in Illustrator, among other skills.

A key difference from the likes of Midjourney and Dall-E is that Adobe Firefly is more open about the data its AI models have been trained on. Adobe says this first beta model has been trained on Adobe Stock images, openly-licensed content, and public domain content where the copyright has expired.

In theory, this makes it a more ethical alternative to rivals that have attracted class-action lawsuits from artists who claim that some AI tools, including those from Midjourney and Stability AI, are illegally based on copyrighted artworks. While this is an understandable policy from a company as big as Adobe, it isn’t yet clear what effect this will have on Firefly’s overall power and versatility.

Adobe is treading carefully in this space, with a Firefly beta sign-up now open. Signing up won’t necessarily grant you access to the new tools, though, as Adobe says that the beta process will be used to “engage with the creative community and customers as it evolves this transformational technology”. But the good news for amateurs is that it will be asking “creators of all skills levels” to contribute.

While it might be a while until we see Adobe Firefly’s new AI models rolled out across its full range of Creative Cloud apps, the early demos show that some fascinating, powerful tools are coming soon. In general, Firefly takes the usability and creative potential of its apps to new heights, thanks to the ability to simply describe an image, style, or text effect you’re looking for.

The first apps that’ll benefit from the Firefly beta are Adobe Photoshop, Adobe Illustrator, Adobe Express, and Adobe Experience Manager. And Adobe says this Firefly beta is just the first in a family of AI models in the pipeline, with all of them likely to be integrated into Creative Cloud and Express workflows.

So what exactly is Adobe Firefly right now and how does it compare to the best AI art generators? We’ve gathered everything you need to know about Adobe’s AI milestone in this guide.

Adobe Firefly: how to sign up and release date

You can apply to be an Adobe Firefly beta tester right now. It isn’t yet clear how many people will be granted beta access, but Adobe will use the process to fine-tune its models before fully integrating them into applications.

Adobe also hasn’t yet revealed how long the beta process will be, but says that it’ll use the period to “engage with the creative community and customers as it evolves this transformational technology”. The speed of its full rollout will likely depend on how successful this beta period proves to be.

Adobe Firefly: which apps is it for?

The first Adobe apps to benefit from Firefly integration will be Adobe Photoshop, Adobe Illustrator, Adobe Express, and Adobe Experience Manager. These will get new tools like text-to-image generation, AI-generated text effects, and more, which you can see in action below.

But AI tools are coming soon to other apps, too. For example, Adobe previewed a feature in Premiere Pro that’ll let you change the season and weather of a video scene, simply by writing the request in a text box.

Video editing is about to get a lot more powerful and user-friendly, although it isn’t yet clear how quickly Adobe plans to roll out the betas for this next wave of Firefly tools.

Adobe Firefly: how do you use it?

We haven’t yet been able to use Adobe Firefly’s new tools, but we have seen them in action. And if they work as well as the early demos, they could have a dramatic impact on how Adobe’s apps work – and who uses them.

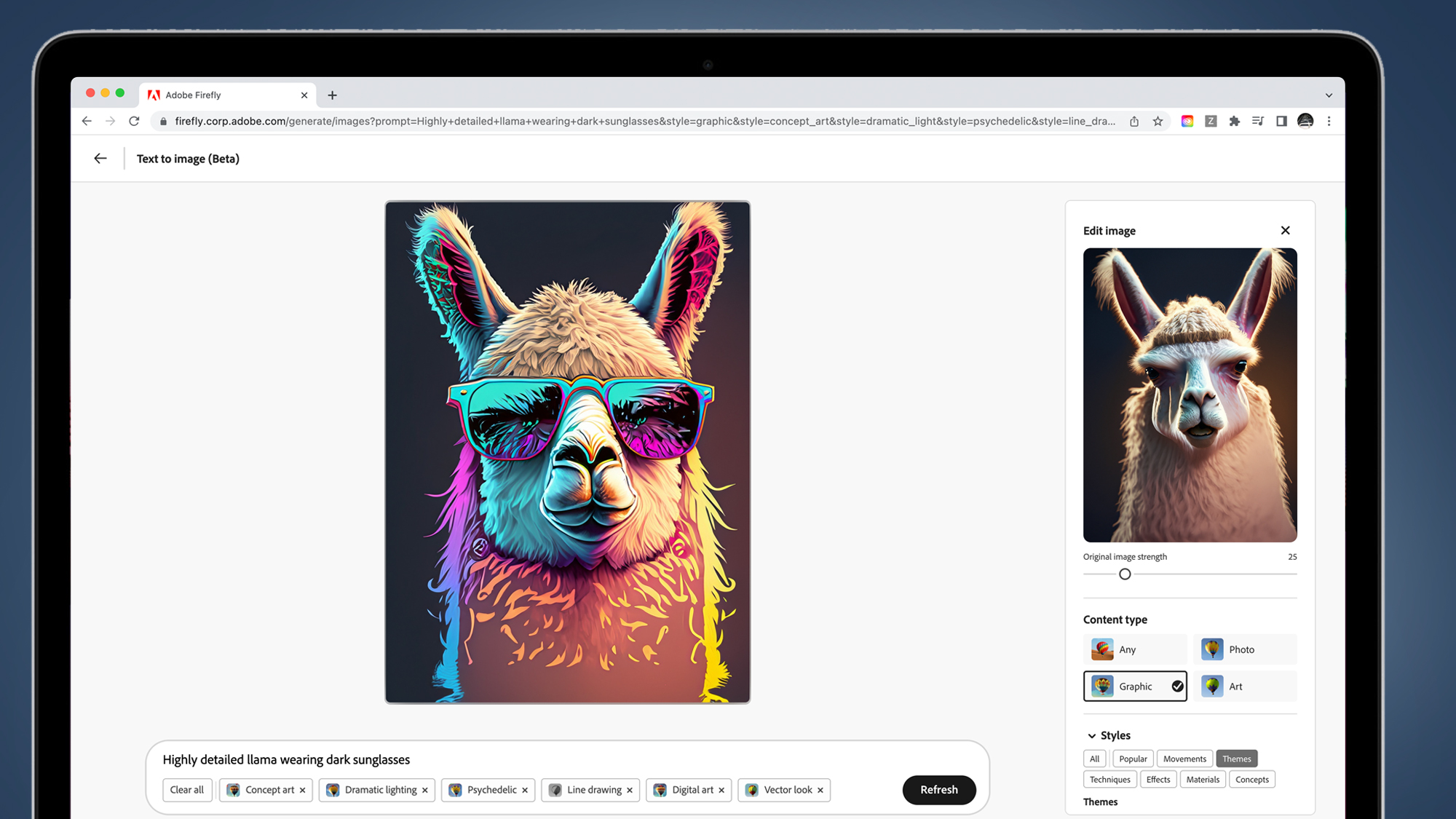

The most obvious parallel to AI art generators like Midjourney, Dall-E, and Stable Diffusion is the text-to-image user interface. Like its rivals, the Firefly beta will let you type a request into a box (for example, “side profile face and ocean double exposure portrait”) and it’ll produce an AI-generated example.

You’ll also be able to apply different styles using a menu that lets you choose between, for example, styling the image as a photo, a graphic, or a piece of art. And there’ll be further tweaks possible from a menu that has options like ‘techniques’, ‘materials’, and ‘themes’.

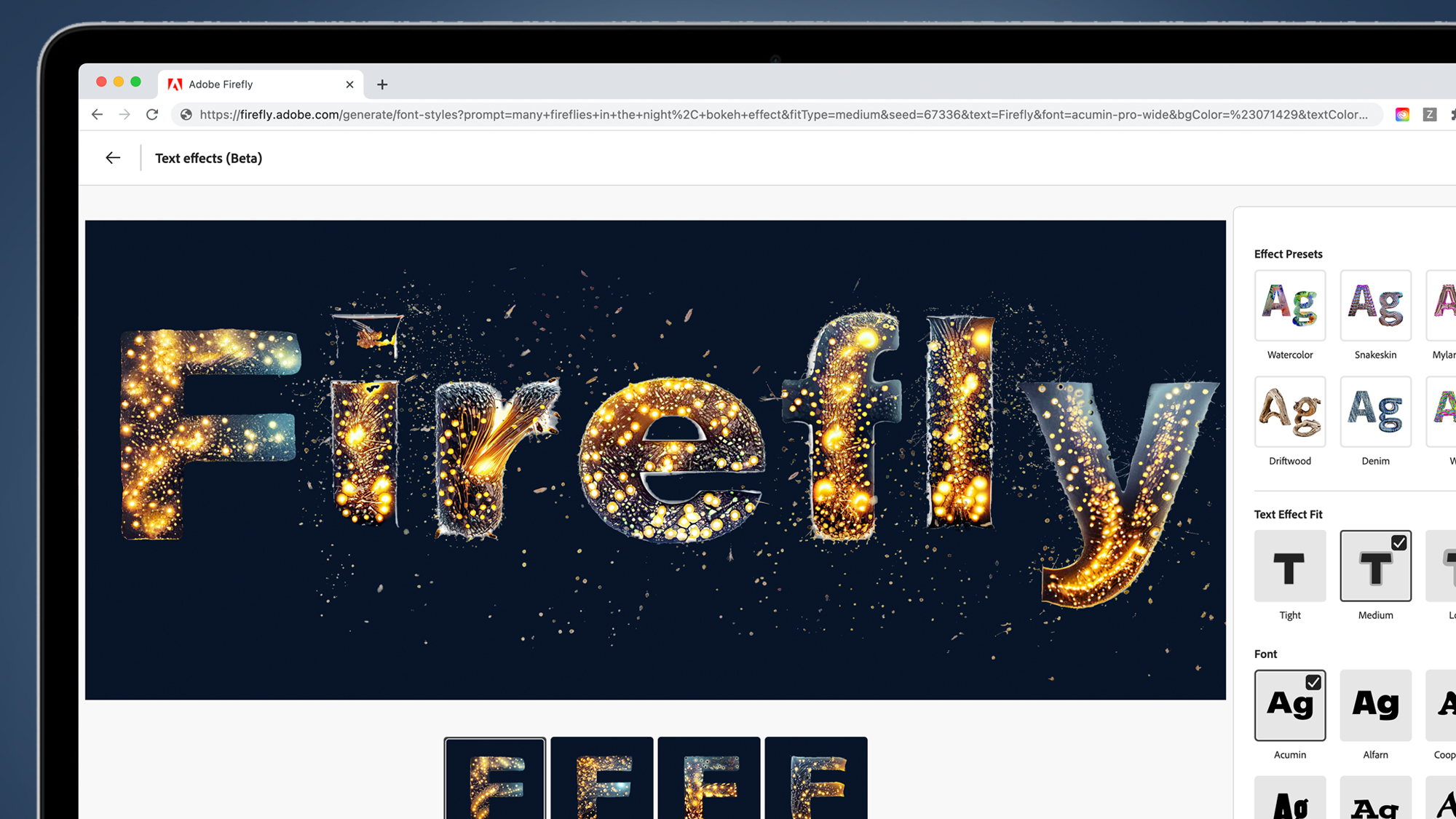

It’ll be a similar story with new AI text effects in the likes of Illustrator. For example, you’ll be able to type a specific prompt like ‘many fireflies in the night, bokeh light’ and the AI generator will cook up a font matching that particular description. The possibilities for marketing, social media, and more are huge, particularly for those with no background in digital art.

Looking further ahead, Illustrator will be able to take sketched fonts and turn them into digital reality, while Adobe Express will let you generate social media templates from simple prompts like ‘make templates from mood board’.

Adobe Firefly vs Midjourney vs Dall-E

It’s a bit too early to draw any conclusions about how well Adobe’s new Firefly tools work compared to the likes of Midjourney and Dall-E, but one area where Firefly does differ is in the AI model’s training.

Adobe says this first Firefly model is trained on “Adobe Stock images, openly-licensed content, and public domain content where copyright has expired”, which means it’ll be available for commercial use without the potential threat of copyright issues. Other AI art generators, meanwhile, are embroiled in potential class-action lawsuits from creators who argue that they involve “the illegal use of copyrighted works.”

Interestingly, Adobe also says that it’s planning to “enable creators that contribute content for training to benefit from the revenue Firefly generates from images generated from the Adobe Stock dataset”. Exactly how Adobe plans to do this hasn’t yet been decided, though, so while the intent is laudable, we’re interested to hear more details. The company says it “will share details on how contributors are compensated once Firefly is out of beta”.

Similarly, Adobe says that one of the broader goals of its Content Authenticity Initiative (an initiative that includes members like Getty and Microsoft) is the creation of a universal ‘Do Not Train’ content credentials tag for images, which would allow artists to exclude their creations from an AI image generator’s training. Again, while this is a promising development, it’s currently only at the “goal” stage.

Adobe Firefly: how good is it?

Adobe Firefly is clearly a huge moment for its creative apps – and anyone who relies on digital tools like Photoshop, Illustrator, or Express.

While many people already use AI-powered Adobe tools (like Photoshop’s Neural Filters), Firefly could open them up to a whole new audience – all you need to do is describe what you want, from images to illustrations and video, and the software ‘co-pilot’ (as Adobe likes to call its AI) will give you a helping hand.

Of course, none of this is new, and the likes of Midjourney and Stable Diffusion have stolen a march on Adobe in getting AI art generators out into the wild. It also remains to be seen how much Adobe’s understandable attempts to make Firefly ethical (by restricting its training data) will impact its overall usefulness and versatility.

The likelihood is that Firefly will simply give existing Adobe customers some very useful new tools to dream up new creations, rather than attracting hordes of converts over from the likes of Midjourney. But we’ll give you our first thoughts once we’ve taken the Firefly beta for a spin, hopefully very soon.